How NetRise Uses Knowledge Graphs to Identify Components in SBOMs

NetRise developer Ivan Pointer discusses components, their relationships, & how NetRise uses knowledge graphs to build SBOMs.

What is a Component

To set the stage, we need to first touch on what a component is and how component relationships work.

Simply put, a component is any part of software that contributes to the whole; a component can be an embedded library within an executable, a plain text file, an archive, a package, or even an implied dependency, such as an entry in a package manifest file. Without getting into function hashing, it is the most basic building block within the software; the main focus of various SBOM formats, such as CycloneDX and SPDX.

By “piece of software”, we mean any arbitrary asset that can be submitted to NetRise’s platform. This could be a firmware image, a software package, an archive, a simple file, or even a docker image.

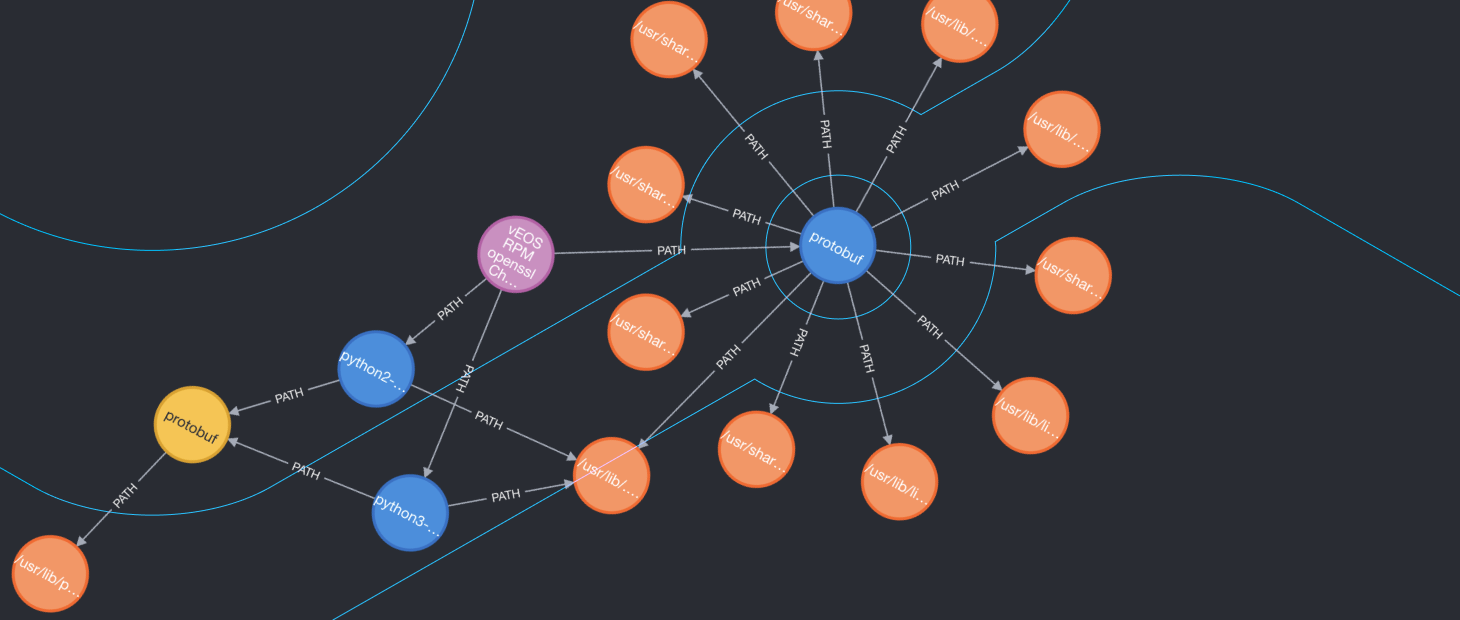

Within this software (and abroad), components have relationships of various kinds between each other. It is this collection of components and their relationships that come together as a knowledge graph.

A Component Knowledge Graph

Let’s explore a few of the different kinds of relationships between components.

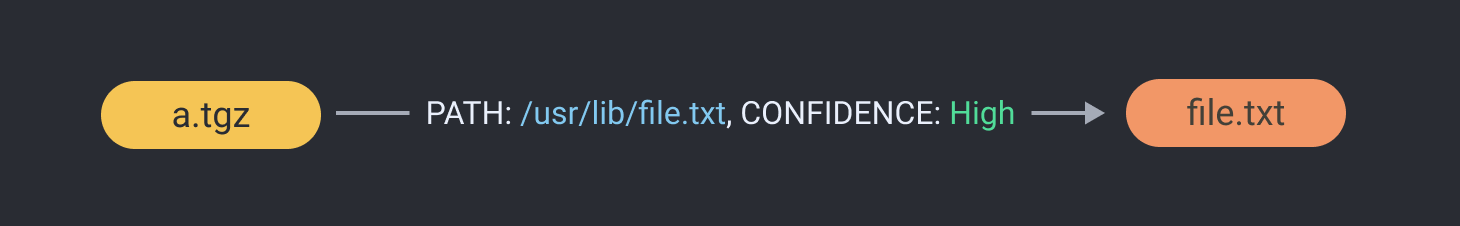

Path Relationships

Perhaps the simplest relationship that can be represented within a component knowledge graph is the containment of one component within another. This is represented with what we call a PATH edge:

With this relationship, we see that the archive a.tgz contains the component file.txt at the path /usr/lib/file.txt

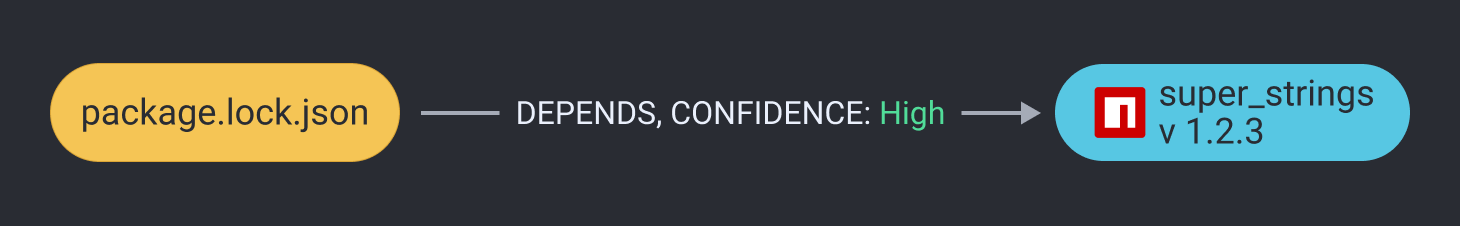

Dependency Relationships

The next common relationship found within a piece of software is an expression of dependency between one component and another. This can be either a concrete dependency, such as:

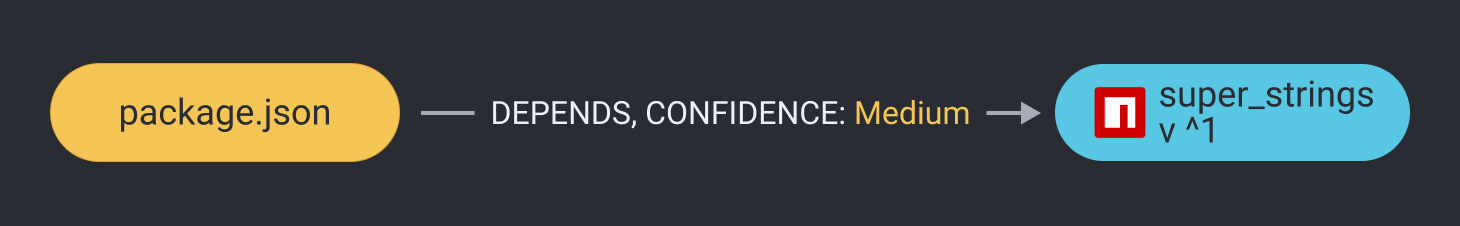

Or an implicit one:

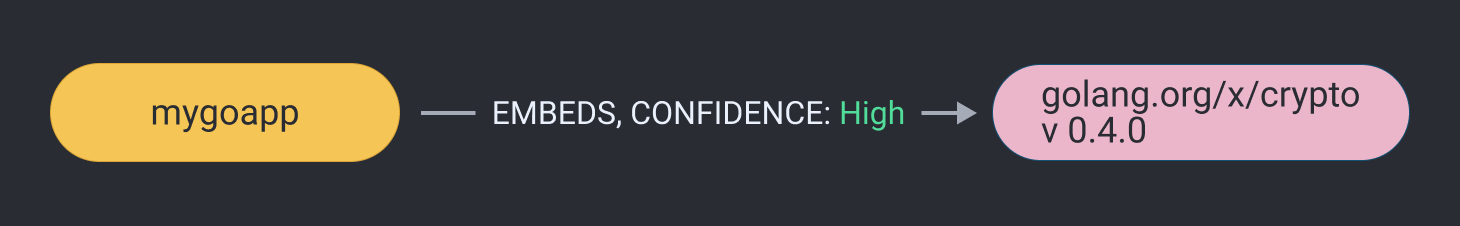

Embedded Relationships

Another form of relationship comes from embedded dependencies, such as in this golang package:

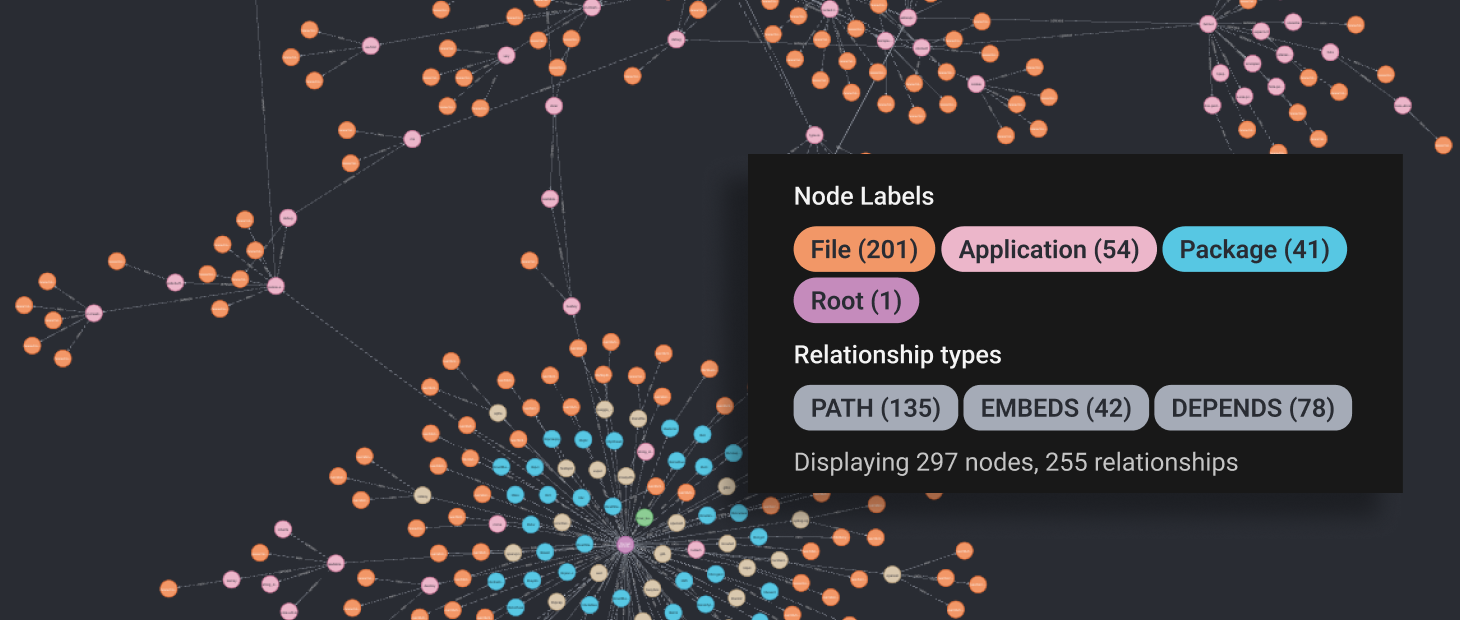

All Together

Put all together, these components and their relationships take the form of a directed acyclic graph, which can be represented like this:

Now, let’s dig in!

Building the Graph

To build the graph of components within an analyzed piece of software we use a 2-phased approach.

First, we collect all the components and relationships we find using a variety of methods. This first phase is called “Projection”.

Next, we refine the graph, distilling it to the simplest representation of itself without sacrificing any detail, accuracy, or confidence. This is called “Correction”.

Let’s explore these two phases in a little more detail.

Projection

During projection, we use a number of different methods to identify each component and relationship within the software:

-

Heuristics - We first look at each file within the software, looking for things such as file type, size, contents, manifests, location, and others, using a proprietary rules engine to identify various components.

-

Package Manifests - Next, we parse a wide array of package manifests to identify components, such as NPM, PYPI, Maven POM, and others. We look at operating system managers such as DEB and RPM as well.

-

Language Specific Tooling - We leverage tools specific to certain programming languages and ecosystems to identify more components.

-

Unique Hashes - We check the checksum of each file against our internal database of known components based on hashes, which we collect from official, authoritative sources.

-

Function Hashing - Using machine learning (ML), we hash functions of various binaries that are found within the software, running these hashes through our trained model as another way to identify components.

-

Other Low Confidence Identification Methods - Lastly, we implement various low-confidence identification methods related to file system artifacts, to ensure that we include as much relevant and useful information as we can, in the resulting graph.

Correction

Having collected everything we could from the analyzed piece of software, we refine the graph down to its most potent form without sacrificing information or confidence. These steps include:

-

Election and Deduplication - It is common for a component to be found multiple times through different methods, with various levels of confidence. During this step, we refine all of the duplicate findings into a single component, carrying the aggregation of all the information of the various findings, leaving only the highest confidence entry.

-

Dependency Resolution - During this step, we look for concrete versions of components that match version expressions in the various package manager manifests, such as NPM’s

package.json. Once found, we further verify the relationship by looking at various other information such as location, source and type, before adding the relationship to the graph. -

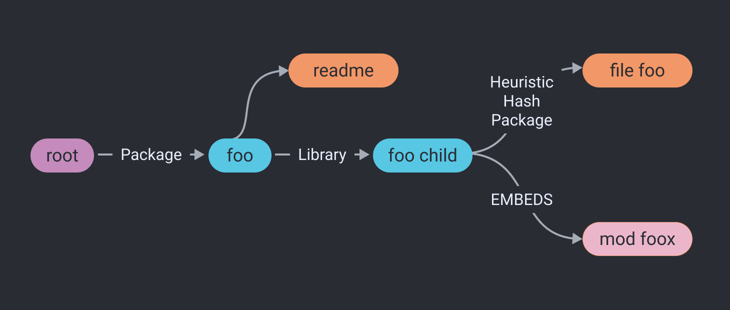

Sorting - Toward the end of the correction process, we sort the dependencies to make sure the graph is represented in a sane manner. Take, for example, a package, a library, and a file. Before sorting, both the package and the library could point directly to the file, hiding the relationship between the package and the library. This misleading relationship would read as: “both the library and the package have this file.” Sorting rearranges the relationship between the three, to accurately represent the relationship between the package, library, and file. After sorting, this same relationship would read as: “the package has the library, which has the file.”

After Component Identification

Having created a complete and refined component knowledge graph of the piece of software, we enrich the findings with more valuable information based on what we found. This enrichment includes known vulnerabilities, broken certificates, cracked passwords, risky IP addresses, and others. We then perform analytics and reporting on the enriched data, preparing the results for our users, resulting in the NetRise Enhanced SBOM.

Stay up to date with the news

Sign Up To Get Our Free Insights Delivered To Your Inbox

Recent Press Releases

Stay up to date with the latest official announcements and corporate milestones from NetRise.